See the original puzzle.

This was a tricky one, if I do say so myself! Question A is a puzzle classic (though the Futurama references are my own), while Universe 1 and the Tale of Interest are my own variations.

First, recall that there are four possibilities when two coins are flipped. The first coin has two possibilities (called H and T for Heads and Tails) and the second has two possibilities. 2x2=4. Those possibilities can be represented with the following:

HH

HT

TH

TT

But wait! Aren't both coins flipped at the same time rather than one after the other? Yes. As a result, we cannot tell the difference between HT and TH. However, each of these two possibilities was assigned a probability of 1/4, so if we combine them into a single possibility (written HT below), it has a total probability of 1/2. The three possibilities that remain no longer have equal probabilities, as shown below:

HH 1/4

HT 1/2

TT 1/4
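If you'd rather check the bookkeeping than trust me, here is a minimal Python sketch of the merging step. The names `ordered` and `unordered` are my own; the only assumption is that the coins are fair, so the four ordered outcomes are equally likely.

```python
from collections import Counter
from fractions import Fraction
from itertools import product

# Four equally likely ordered outcomes: HH, HT, TH, TT.
ordered = ["".join(p) for p in product("HT", repeat=2)]

# Merge HT and TH by sorting the letters, since we cannot tell the coins apart.
unordered = Counter()
for outcome in ordered:
    key = "".join(sorted(outcome))  # "TH" becomes "HT"
    unordered[key] += Fraction(1, len(ordered))

for key, prob in unordered.items():
    print(key, prob)
```

The merged HT entry collects the probability of both ordered outcomes, which is why it ends up twice as likely as HH or TT.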

Now let's examine what happened in each universe. Details are key!

In Universe A, Fry asks whether at least one coin is heads. Leela says yes. Therefore, we can eliminate the TT possibility. What's left?

HH 1/4

HT 1/2

But the probabilities don't sum up to 1! The numbers are nonsensical. What do we do?

Consider a simpler case. If I flip one coin, it has a 1/2 probability of being heads. However, if I tell you the actual result was heads, the probability changes from 1/2 to 1. The same concept applies here. After we've eliminated a possibility, we have to multiply all the other probabilities by a number (here, that number is 4/3) such that the total probability is 1 again.

HH 1/3

HT 2/3

Conclusion: There is a 1/3 chance that both coins are heads.
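The eliminate-then-rescale step can be sketched in a few lines of Python. The helper `condition` is a hypothetical name of my own, not anything from the puzzle; it simply drops the ruled-out possibilities and divides by what is left, which is the same as multiplying by 4/3 here.

```python
from fractions import Fraction

def condition(dist, keep):
    """Drop outcomes that fail `keep`, then rescale so the rest sum to 1."""
    kept = {o: p for o, p in dist.items() if keep(o)}
    total = sum(kept.values())
    return {o: p / total for o, p in kept.items()}

prior = {"HH": Fraction(1, 4), "HT": Fraction(1, 2), "TT": Fraction(1, 4)}

# Leela says yes to "is at least one coin heads?", so TT is eliminated.
universe_a = condition(prior, lambda o: "H" in o)
print(universe_a)
```

Dividing by the remaining total (3/4) is what turns 1/4 and 1/2 into 1/3 and 2/3.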

Universe 1 is nearly the same as Universe A, but with a key difference! Fry simply asks what the coins are, and Leela does not simply answer yes or no. She instead says, "At least one coin is tails." She could just as easily have said, "At least one coin is heads." How did she decide between these two statements? The puzzle doesn't say, so you cannot prove this, but the most reasonable assumption is that Leela looks at the coins, picks one of them at random, and reports its face.

Now we've got to break up the 3 possibilities into 6 possibilities, since Leela can either pick the first one or the second one. It doesn't matter that Leela doesn't know which is first or second, nor does it matter whether the first and second happen to have the same face showing. Here are the possibilities:

HH; Leela picks H 1/8

HH; Leela picks H 1/8

HT; Leela picks H 1/4

HT; Leela picks T 1/4

TT; Leela picks T 1/8

TT; Leela picks T 1/8

We know that Leela picked tails, so let's reduce the possibilities, and then make sure the probabilities add up to 1.

HT; Leela picks T 1/2

TT; Leela picks T 1/4

TT; Leela picks T 1/4

Conclusion: There is a 1/2 chance that both coins are tails.
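Here is a rough Python sketch of the same count. It assumes, as I did above, that Leela names either coin with equal probability. For convenience it keeps HT and TH separate, so there are eight equally likely cases at 1/8 each rather than six with mixed weights; the two ways of counting agree.

```python
from fractions import Fraction
from itertools import product

# Each case is (coins, face Leela names). Two coins times two choices of
# which coin she picks gives 8 equally likely cases.
cases = [
    (a + b, (a + b)[i])
    for a, b in product("HT", repeat=2)
    for i in (0, 1)
]

# Leela said "at least one coin is tails", i.e. the coin she picked was tails.
kept = [coins for coins, face in cases if face == "T"]
p_both_tails = Fraction(kept.count("TT"), len(kept))
print(p_both_tails)
```

Note that TT survives twice (she can pick either tail), while HT and TH each survive only once. That double counting is exactly what lifts the answer from 1/3 to 1/2.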

In the Tale of Interest, we are given additional information that the two universes are two sides of the same coin, so to speak. So is the probability 1/2 or 1/3? I admit that I wasn't sure myself at first. But we can divide up the possibilities into 6, just like before, and find out. The following list will be slightly more complicated:

Universe A: TT; Universe 1: HH; Leela picks H 1/8

Universe A: TT; Universe 1: HH; Leela picks H 1/8

Universe A: TH; Universe 1: HT; Leela picks H 1/4

Universe A: TH; Universe 1: HT; Leela picks T 1/4

Universe A: HH; Universe 1: TT; Leela picks T 1/8

Universe A: HH; Universe 1: TT; Leela picks T 1/8

Here "Leela picks" refers to the Leela in Universe 1, who picks one of her own coins. Based on our information from Universe A (at least one coin is heads), we can eliminate the first two possibilities. Based on our information from Universe 1 (Leela picked tails), we can eliminate the first three possibilities. Here's what we have left:

Universe A: TH; Universe 1: HT; Leela picks T 1/2

Universe A: HH; Universe 1: TT; Leela picks T 1/4

Universe A: HH; Universe 1: TT; Leela picks T 1/4

Conclusion: There is a 1/2 chance that both coins are heads in Universe A (and therefore that both coins are tails in Universe 1).
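For the skeptical, here is a sketch of the combined count in Python. It takes the universes as they were described in the earlier sections: Universe A is where Leela answers yes to "is at least one coin heads?", and Universe 1 is where she volunteers "at least one coin is tails." It also assumes that each coin in one universe shows the opposite face of its counterpart, and that Universe 1's Leela picks either of her coins with equal probability. The variable names are mine.

```python
from fractions import Fraction
from itertools import product

flip = {"H": "T", "T": "H"}

# Each case is (Universe A coins, Universe 1 coins, face named in Universe 1).
cases = []
for a, b in product("HT", repeat=2):
    coins_a = a + b
    coins_1 = flip[a] + flip[b]  # mirrored coins in the other universe
    for i in (0, 1):             # which coin Universe 1's Leela picks
        cases.append((coins_a, coins_1, coins_1[i]))

# Keep cases consistent with both universes' statements.
kept = [c for c in cases if "H" in c[0] and c[2] == "T"]
p_a_both_heads = Fraction(sum(c[0] == "HH" for c in kept), len(kept))
print(p_a_both_heads)
```

Once Universe 1's Leela has named a tail, the mirrored coin in Universe A is automatically a head, so the Universe A condition removes nothing extra. All of the new information comes from how the statement in Universe 1 was chosen.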

One question I anticipate is, "How can the probability change between Universe A and the Tale of Interest? Aren't they asking the same question?" It's the same question, but there is additional information given in the Tale of Interest. Leela in Universe A has no idea what Leela in Universe 1 is up to. New information can change probabilities. It's just like when I tell you what the actual result of a coin flip is.

Ok, so I've gone through a lot of detail here, but my experience with probability puzzles has taught me that many people will look at this and still think it's intuitively wrong. Oh, the flaws of intuition! Please ask, and I'll attempt to convince your intuition. But you must realize that it's the math that has authority, not intuition.
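If the math alone doesn't persuade your intuition, a simulation might. This is only a sketch: `simulate` is a made-up helper, the seed and trial count are arbitrary, and Universe 1's Leela is again assumed to pick one of the two coins at random.

```python
import random

def simulate(trials=100_000, seed=0):
    rng = random.Random(seed)
    a_yes = a_both_heads = 0        # Universe A: Leela answers "yes" to heads
    one_tails = one_both_tails = 0  # Universe 1: Leela names a tail
    for _ in range(trials):
        coins = [rng.choice("HT"), rng.choice("HT")]
        if "H" in coins:
            a_yes += 1
            a_both_heads += coins == ["H", "H"]
        if rng.choice(coins) == "T":
            one_tails += 1
            one_both_tails += coins == ["T", "T"]
    return a_both_heads / a_yes, one_both_tails / one_tails

p_a, p_1 = simulate()
print(f"Universe A, both heads: {p_a:.3f}")
print(f"Universe 1, both tails: {p_1:.3f}")
```

The first number should hover near 1/3 and the second near 1/2, and the only difference between the two counts is how Leela's statement gets selected.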

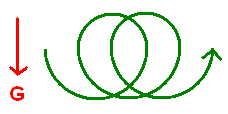

It is an image of a top. My program makes it spin! See, I am trying to model an extremely complicated type of motion called "rotation". It's so complicated, I don't know if I should even try to explain it. It involves vectors, tensors, matrices, and differential equations...